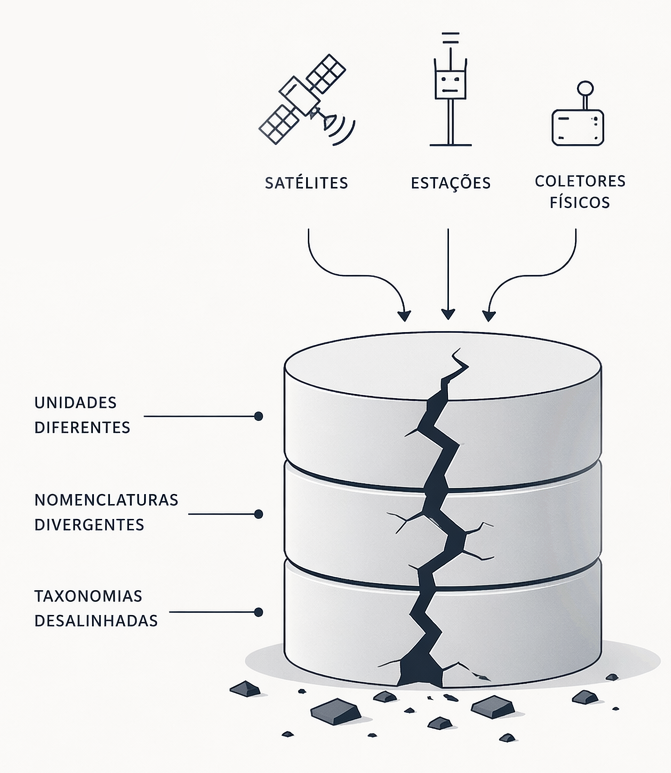

The realization that scientific data handling had a critical problem came directly from practice. Working as an independent researcher and invited specialist on projects such as CAIPORA, I saw that the varied information the groups relied on, coming from satellites, physical collectors, and stations, arrived in completely different formats. Even with an organized grouping logic, no one had time to stop and normalize this data: units did not match, and taxonomies diverged.

Without dedicated governance for this process, the project started out with inconsistencies in its most basic information, which I call starting the work on a “cracked foundation”. This gap motivated me to structure DataShipper, which works on fronts that treat and normalize data so that the academic team can use it more easily, with a methodology designed to scale across different technologies and ecosystems.

Below, I present an analysis of the challenges faced and how DataShipper seeks to solve them.

1. Identifying the “Cracked Foundation” at the Beginning of Research

The first major challenge of modern science is the fragmentation of sources. In large-scale projects, each scientist takes responsibility for their specialty, but the absence of centralized governance causes normalization to be neglected. The result is a fundamentally problematic information base even before scientific development begins.

What truly makes a difference for a project aiming for academic and social reach is not only basic organization, but a normalization logic that connects this data to the real world. Within DataShipper’s range of services and fronts, we work so that this normalization becomes the foundation that allows the academic team to focus on what matters, ensuring the integrity of the research from the start.
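To make the idea of normalization concrete, here is a minimal Python sketch of mapping records from different sources onto one shared schema. Everything in it is an illustrative assumption rather than DataShipper's actual implementation: the `Reading` type, the `normalize` function, the `TAXONOMY` and `TO_CELSIUS` tables, and the example labels and units are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical conversions into one canonical unit (degrees Celsius).
TO_CELSIUS = {
    "C": lambda v: v,
    "K": lambda v: v - 273.15,
    "F": lambda v: (v - 32.0) * 5.0 / 9.0,
}

# Hypothetical mapping from source-specific labels to one shared taxonomy.
TAXONOMY = {
    "temp": "air_temperature",
    "air_temp": "air_temperature",
    "T2M": "air_temperature",
}


@dataclass(frozen=True)
class Reading:
    source: str
    variable: str
    value_celsius: float


def normalize(source: str, raw: dict) -> Reading:
    """Map one raw record onto the shared schema, or fail loudly."""
    label, unit = raw["variable"], raw["unit"]
    if label not in TAXONOMY:
        raise ValueError(f"unknown variable label: {label!r}")
    if unit not in TO_CELSIUS:
        raise ValueError(f"unknown unit: {unit!r}")
    value = round(TO_CELSIUS[unit](raw["value"]), 2)
    return Reading(source, TAXONOMY[label], value)


satellite = normalize("satellite", {"variable": "T2M", "unit": "K", "value": 300.15})
station = normalize("station", {"variable": "temp", "unit": "F", "value": 80.6})
```

In this sketch, a label or unit that is not in the tables raises an error instead of passing through silently, so a mismatch surfaces when the data enters the project rather than months later.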

2. The “Leap of Faith”: Computational and Cognitive Obstacles

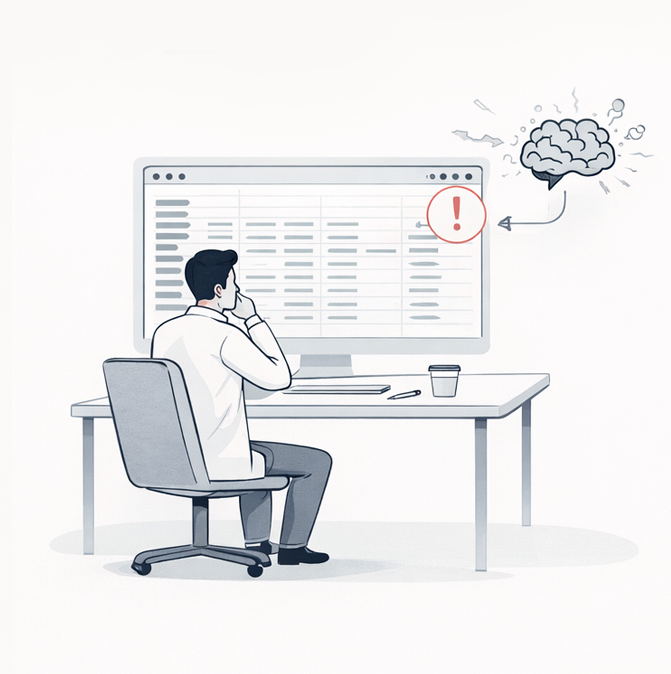

The transition from raw data to a concrete solution faces severe barriers, starting with the computational challenge of processing and storing terabytes of information. Added to this is the cognitive limit of interpreting raw data without interfaces that translate it efficiently.

This difficulty pushes the academic body toward validation based only on samples or “pieces,” resulting in a dangerous “leap of faith”. It is important to highlight that, although the scientific method guarantees the integrity of the final result, these small “leaps of faith” are nothing more than the choice to move forward with the latent risk of having to redo the work. The absence of a universal system forces the researcher to work for months only to discover, through an unexpected result, that the initial information was not accurate. DataShipper aims precisely to replace this risk with the ability to guarantee data integrity in advance.
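The contrast between validating a sample and checking everything up front can be sketched in a few lines of Python. This is only an illustration under assumed names: `find_problems`, `REQUIRED_FIELDS`, and `KNOWN_UNITS` are hypothetical, and a real project would need checks specific to its own data.

```python
# Hypothetical rules; a real project would define its own.
REQUIRED_FIELDS = ("variable", "unit", "value")
KNOWN_UNITS = {"C", "K", "F"}


def find_problems(records):
    """Check every record, not a sample, and list each problem found."""
    problems = []
    for index, record in enumerate(records):
        missing = [field for field in REQUIRED_FIELDS if field not in record]
        if missing:
            problems.append((index, "missing fields: " + ", ".join(missing)))
            continue  # the remaining checks need all fields present
        if record["unit"] not in KNOWN_UNITS:
            problems.append((index, f"unknown unit: {record['unit']}"))
        if not isinstance(record["value"], (int, float)):
            problems.append((index, "value is not numeric"))
    return problems


records = [
    {"variable": "temp", "unit": "C", "value": 21.5},
    {"variable": "temp", "unit": "X", "value": 1.0},
    {"variable": "temp", "value": 2},
]
problems = find_problems(records)
```

Because the function walks the full list, the second and third records are flagged before any analysis begins, which is the opposite of discovering the inaccuracy through an unexpected result.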

3. The Pain of Coordination and the Risk of Rework

At the managerial level, the academic coordinator must balance quality and speed while managing heterogeneous teams: projects are multidisciplinary, and some include everyone from undergraduate research fellows to experienced PhDs. Validating what each scientist brings to the project means working through raw data to assess its integrity, an exhausting task that often leads to rework. The core of leadership, which is to advance the group’s knowledge, ends up competing with routine tasks that take on outsized importance.

As one of its support fronts for coordination, DataShipper guarantees data integrity and normalization as a premise of the project. This is carried out through intelligent solutions (such as the “Data Bridge”) and a methodology for researcher integration and onboarding. This approach eliminates concern about whether the data is functional and prevents errors discovered too late from compromising the project. By ensuring that the team’s deliveries are integrated with one another, we increase confidence in the team and reduce the coordinator’s insecurity regarding the integrity of the applied scientific methodology.

4. Acting at the Root of the Problem: DataShipper’s Differential

The essence of our work is addressing problems at their root. We understand that if an inconsistency forces the researcher to go back and redo the work, the failure lies not in the scientific discovery but in how the data was systematized and kept consistent.

One of DataShipper’s fundamental fronts is to create mechanisms that keep this data organized and standardized, restoring the confidence the academic team needs. Our methodology ensures that the data is distributed in normalized form and placed into scalable technologies, allowing for dynamic, productive, and human-centered consumption. This lets the research evolve over the long term and connect with other ecosystems. Working in parallel with the project team, we ensure that the data keeps its integrity along the entire path until it reaches society, where it can make a real difference.